Neural Hardware

Even if the yearly performance gain of digital computers were to follow Moore's law indefinitely, it would take at least another half-century to reach the computing power needed to simulate larger parts of the brain. If the model is also to include development, this simulation gap widens further. The neuroscience community has a strong need for systems that allow the modelling of moderately sized neural microcircuits, including synaptic plasticity and cellular diversity. There are several approaches to meeting this need:

- Using parallel programming techniques on a computer cluster

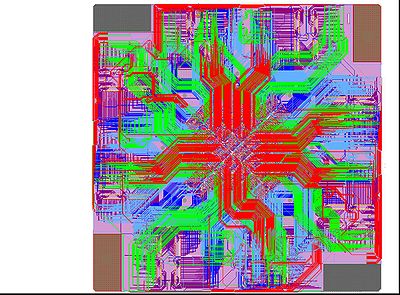

- Developing a specialized digital system based on FPGA or ASIC technology

- Creating a physical VLSI model of the neural circuit under investigation

(Brain Mind Institute, EPFL Lausanne)

Specialized digital hardware processors might gain an advantage over microprocessor-based systems. Still, the gain is unlikely to exceed an order of magnitude, since they are built on the same technology as microprocessors: the neural circuits must still be realised as numerical solutions of differential equations. The biggest problem lies in the foundation of Moore's law itself, the scaling of process technology. In the current semiconductor roadmap, progress has already slowed down. The transistor density of high-performance microprocessors is likely to increase by only a factor of 25 from 2004 to 2018, which corresponds to one doubling roughly every three years. Such a hypothetical chip is predicted to consume 300 watts, while its on-chip operating frequency will be in the 50 GHz range.
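To make "numerical solutions of differential equations" concrete, the following is a minimal sketch of how a digital system might step a single neuron forward in time. It assumes a leaky integrate-and-fire model and forward-Euler integration, neither of which is specified in the text; the function name `simulate_lif` and all parameter values are illustrative assumptions only.

```python
def simulate_lif(i_input, t_end=0.1, dt=1e-4, tau_m=0.02, r_m=1e7,
                 v_rest=-0.065, v_thresh=-0.050, v_reset=-0.065):
    """Forward-Euler integration of a leaky integrate-and-fire neuron.

    Integrates tau_m * dV/dt = -(V - v_rest) + r_m * I with a fixed step dt.
    Units: volts, amperes, ohms, seconds. All values are illustrative
    assumptions, not parameters taken from the text.
    Returns the list of spike times in seconds.
    """
    v = v_rest
    spike_times = []
    n_steps = int(round(t_end / dt))
    for step in range(n_steps):
        # One Euler step: evaluate the derivative, then advance by dt.
        dv_dt = (-(v - v_rest) + r_m * i_input) / tau_m
        v += dt * dv_dt
        if v >= v_thresh:
            # Threshold crossing: record the spike and reset the membrane.
            spike_times.append((step + 1) * dt)
            v = v_reset
    return spike_times


if __name__ == "__main__":
    # A constant 2 nA input drives the membrane above threshold.
    print(simulate_lif(2e-9))
```

Every neuron costs one such update per time step, and every synapse adds further arithmetic, which is why a digital simulation of a large network scales with the raw throughput of the underlying process technology.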

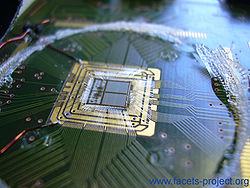

(Spikey, 384 neurons and about 100,000 synapses)

(Electronic Vision(s) Group, Heidelberg)

Thus, the only way to achieve a significant gain in simulation speed within the current decade is to parallelize dedicated analog circuits that directly implement the processes in nerve cells. Dedicated hardware such as analog ASICs can be optimized for parallelization. In FACETS' very-large-scale neural network systems, the cell-based calculations will be done using analog models, and communication across medium or long distances will use digital (spike-time) coding. With this approach, the final system size is limited only by the available resources and not by physical (signal degradation) or timing limitations.
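The idea of digital spike-time coding can be illustrated with a small sketch. It assumes that each spike is transmitted as a timestamp plus a source address and that streams from several chips are merged in time order; this is a generic event-based scheme chosen for illustration, not a description of the actual FACETS protocol. The names `SpikeEvent`, `encode` and `merge_streams` are hypothetical.

```python
import heapq
from typing import Dict, List, NamedTuple


class SpikeEvent(NamedTuple):
    """One digitally coded spike (hypothetical layout)."""
    time: float   # spike time in seconds
    chip: int     # id of the source chip (assumed addressing scheme)
    neuron: int   # index of the neuron on that chip


def encode(chip: int, spikes_by_neuron: Dict[int, List[float]]) -> List[SpikeEvent]:
    """Turn per-neuron spike times into one time-ordered event stream."""
    events = [SpikeEvent(t, chip, n)
              for n, times in spikes_by_neuron.items()
              for t in times]
    return sorted(events)  # tuples sort by time first


def merge_streams(*streams: List[SpikeEvent]) -> List[SpikeEvent]:
    """Merge already sorted streams from several chips by spike time."""
    return list(heapq.merge(*streams))


if __name__ == "__main__":
    chip0 = encode(0, {0: [0.003, 0.001], 1: [0.002]})
    chip1 = encode(1, {5: [0.0015]})
    for event in merge_streams(chip0, chip1):
        print(event)
```

Because only the time and address of each spike travel over the digital links, the analog membrane signals never leave the chip, which is consistent with the claim that system size is not bounded by signal degradation.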
